This month’s image inspiration is community member Sami Jawhar. Sami has contributed to DVC in the past and, most recently, has worked with the DVC and CML teams on extending our remote experimentation features to run experiments in parallel, which you can check out here and [here](https://github.com/iterative/terraform-provider-iterative/compare/master…sjawhar:terraform-provider-iterative:feature/nfs-volume). Look out for him speaking at a Meetup on this topic soon!

Last year Sami presented at one of our Office Hours meetups on “What is an experiment?” More specifically he asked, at what level of granularity do you experiment and when do you share with your team? He shared great ideas, tips, and code in the session and spurred a great discussion with other community members. We look forward to the next Meetup!

✨Image Inspo✨

As the summer fades and we get revved up to finish off the year, we start the September Heartbeat with some juicy food-for-thought AI topics.

From the Greater AI/ML Community

Meta Is Building an AI to Fact-Check Wikipedia—All 6.5 Million Articles

Meta's researchers built an index of web pages, chunked each page into passages, and gave the model an accurate representation of each passage to train on. Their aim is to capture meaning more accurately, rather than word patterns. From Fabio Petroni, Meta’s Fundamental AI Research tech lead manager:

[This index] is not representing word-by-word the passage, but the meaning of the passage. That means that two chunks of text with similar meaning will be represented in a very close position in the resulting n-dimensional space where all these passages are stored.
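To make the idea of "similar meaning, close position" concrete, here is a minimal sketch of nearest-neighbor lookup by meaning, assuming each passage has already been turned into a vector by some encoder. This is not Meta's Side implementation: the three-dimensional vectors below are hypothetical and hand-picked for illustration (a real system would get them from a learned model and use many more dimensions), and `cosine_similarity` and `nearest_passage` are our own helper names.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: close to 1.0 means they point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest_passage(query: list[float], index: dict[str, list[float]]) -> str:
    """Return the stored passage whose vector is most similar to the query vector."""
    return max(index, key=lambda passage: cosine_similarity(query, index[passage]))


# Hypothetical, hand-picked embeddings for illustration only (not real model output).
# The two cat sentences share a meaning but almost no words, so their vectors sit close together.
index = {
    "The cat sat on the mat.": [0.9, 0.1, 0.0],
    "A feline rested on a rug.": [0.85, 0.15, 0.05],
    "Stock prices fell sharply.": [0.0, 0.2, 0.95],
}

# Hypothetical vector for a query such as "A cat was lying on a carpet."
query = [0.88, 0.12, 0.02]

if __name__ == "__main__":
    print(nearest_passage(query, index))
```

The query lands next to the two cat passages rather than the finance one, even though it shares few words with either. Real retrieval systems typically replace the linear `max` scan with an approximate nearest-neighbor index so that lookups stay fast across millions of passages.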

They hope to ultimately be able to suggest accurate sources and create a grading system for accuracy. You can find a demo of the project, named Side, here to look at samples and go deeper into the research. They are looking for people to give feedback on the quality of the system.

Vanessa brings up some great questions regarding this:

If you imagine a not-too-distant future where everything you read on Wikipedia is accurate and reliable, wouldn’t that make doing any sort of research a bit too easy? There’s something valuable about checking and comparing various sources ourselves, is there not? It was a big leap to go from paging through heavy books to typing a few words into a search engine and hitting “Enter”; do we really want Wikipedia to move from a research jumping-off point to a gets-the-last-word source?

To these I’d add: what’s Meta’s/Amazon Alexa's monetary motivation to do this (because there always is one), and, given past ethical infractions on Meta's part (1, 2, 3, 4, and 5), should we applaud this? Or is this collaboration with universities a step in the right direction?

European AI Act

The article covers critiques of the Act from Alex Engler of the think tank Brookings, drawing on this piece. Oren Etzioni, the founding CEO of the Allen Institute for AI, adds that such regulation could create an undue burden that only large tech companies could comply with:

“Open source developers should not be subject to the same burden as those developing commercial software. It should always be the case that free software can be provided ‘as is’ — consider the case of a single student developing an AI capability; they cannot afford to comply with EU regulations and may be forced not to distribute their software, thereby having a chilling effect on academic progress and on reproducibility of scientific results.”

The article discusses some proponents of the Act, as well as alternative views on the granularity of regulation (product vs. category, or downstream responsibility). Finally, it ends with comments from Hugging Face CEO Clément Delangue and his colleagues on the Act's vagueness and the problems that can arise from this lack of clarity, including stifled competition and innovation. They also point to growing community-born Responsible AI initiatives, such as AI licensing and model cards that outline the intended use of open source technology, as positives.

So does regulation stifle technology or provide guardrails?

My colleague Rob de Wit would like to point out that similar concerns were raised when the EU introduced the GDPR in 2016, which has turned out to be of major importance to people's rights to privacy — in the EU and worldwide.

To what degree should AI technology be regulated? Where do you draw the lines? It’s quite clear that the technology moves faster than lawmakers can keep up with, and the potential for harm is well known at this point. We could say, as I believe, that reflection on the consequences should be baked into the building process. In practice, however, despite the best intentions, the overarching push for better and faster often results in negative consequences that are only discovered after the fact.

How do we incentivize reflecting on consequences in our processes? Would regulation force this? It might make development slower, but would it force the social-good work that must be done in the development of AI tech?

What other industries face similar dilemmas, and how do they handle them? The Hippocratic Oath has served medicine well for thousands of years.

Do We Need a Hippocratic Oath for Artificial Intelligence Scientists?

Pulse Check

We would love to hear (read) your thoughts on this! Each month, we are starting a “Pulse check” discussion on a topic from the Heartbeat in the General channel of our Discord server. Come join the discussion!

Iterative Community News

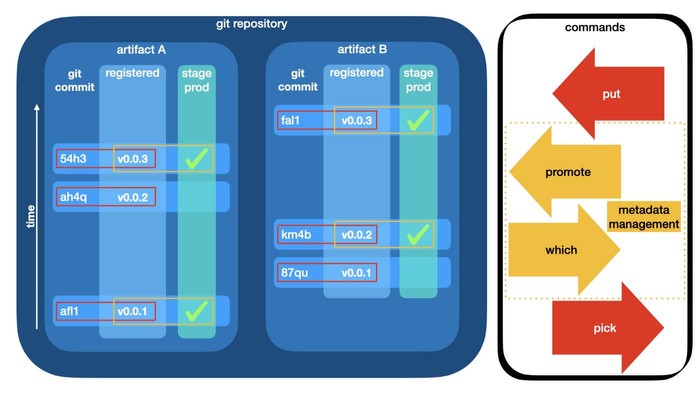

Francesco Calcavecchia - We refused to use a hammer on a screw: Story of GTO-based model registry

Francesco Calcavecchia wrote a piece on Medium about building a custom model registry with GTO.

He identifies the main reasons for needing a model registry:

- When you need model versioning

- When you need to promote or assign models to different stages

- When you need to establish production model governance

Additionally, he found it necessary to register data analysis and model evaluation outputs in an artifact registry, so he used GTO and DVC to accomplish this. He goes into more detail about why he chose GTO over MLflow: essentially, he appreciates our UNIX philosophy, which favors agility over prescriptive methods that hamper your design choices. He notes:

It is hard to think of something simpler than this. And simplicity is beauty ❤️

He then discusses some things he found missing for his needs, such as using GTO in a production pipeline rather than committing models by hand. He also describes his work on solutions to build the artifact registry, introduce new commands, and streamline handling of the remote storage secrets that `dvc push` requires.

Please join him in his contributions. We love to see where this is going! 🚀

MLOps Course at the Technical University of Denmark includes DVC and CML

- Getting started

- Organization and version control (find Git and DVC here)

- Reproducibility

- Debugging and logging

- Continuous X (find CML here)

- The Cloud

- Scalable applications

- Deployment

- Monitoring

- Extra Resources

The materials are great and even include some funny memes. Isn't an open-source model amazing for learning? Cheers to DTU for including our tools and for openly sharing these learning materials with the world!

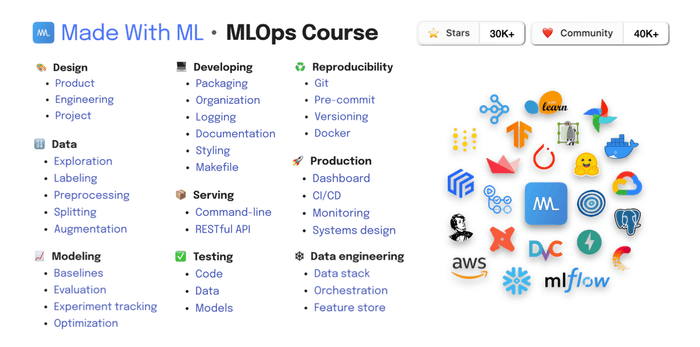

Goku Mohandas - Made With ML MLOps Interactive Course

You likely already know of Goku Mohandas' wildly popular free course Made With ML, which includes DVC. Knowing that it can be challenging to learn everything on your own, he is starting an interactive class on October 1st. The application deadline is September 25th.

Find more details here.

Adrià Romero - YouTube review of DVC

Adrià Romero, Computer Vision Developer at Lakera, has a regular series reviewing tools that can make computer vision easier, and recently reviewed DVC. He demos pushing data with DVC to a Google Drive remote and goes over how to share DVC-tracked data. He then covers the data pipelines functionality that can be used in CI/CD pipelines and shows the benefits of tracking versions of everything, including data, models, pipelines, parameters, and experiments. Finally, he mentions that our documentation is super clear and useful, which makes us very happy. 🦉 Check out the review below.

Sydney Firmin - Reproducibility, Replicability, and Data Science

Sydney Firmin wrote a wonderful piece on KDnuggets outlining the replicability crisis and the importance of reproducibility in science in general and in data science in particular. She highlights the growing awareness of irreproducible research, driven by technology making research more widely circulated. She encourages standardizing a paradigm of reproducibility in data science work to promote efficiency and accuracy, and to help your future self and colleagues check work and reduce bugs.

Of course, she recommends DVC as a possible tool to help with this and notes,

fun fact, this is my second attempt at writing this post after my computer was bricked last week. I am now compulsively saving all of my work in the cloud.

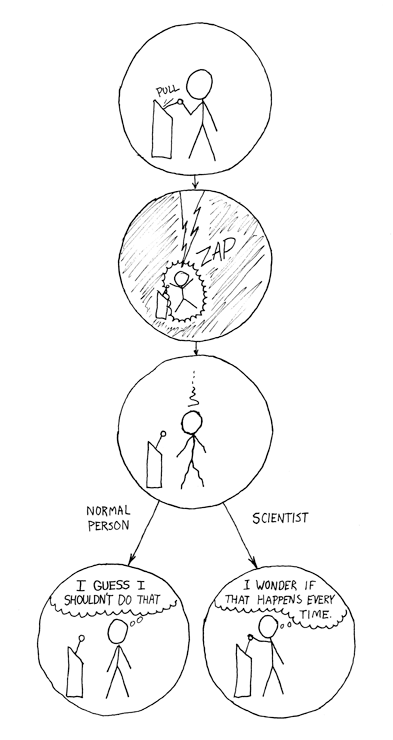

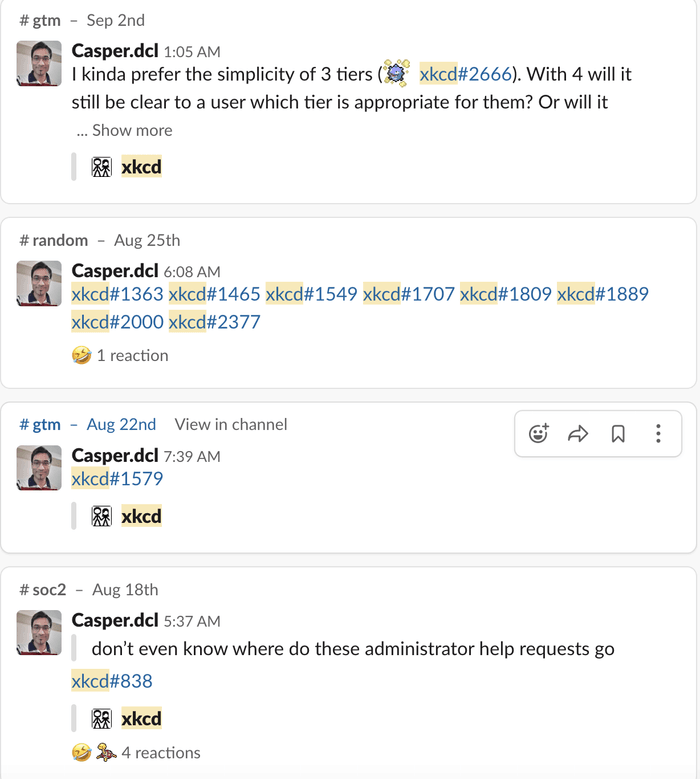

Haven’t we all been there? 🙋🏻‍♀️ She goes on to describe other contributors to irreproducible results, including p-hacking, and discusses methods beyond tooling that can help, such as preventing overfitting, using a sufficiently large dataset, and team review. All this and some fun xkcd comics can be found in the post, including the one shown above!

Speaking of xkcd comics, Casper da Costa Luis, CML Product Manager, loves xkcd and regularly regales us with the comics in our internal Slack. He is also an expert at TL;DRing (yes, I just made that a verb). Part of his secret to this excellence is to “suppress my latent desire to add a relevant xkcd comic.” As you can see, that desire does not win every day. Self-discipline is a good thing.

😄 Iterative xkcd Lore

Company News

MLEM, MLEM, MLEM, this dog food is good!

Over the summer, you may have noticed that our blog moved from the DVC website to the Iterative website. Because we now have many more tools than DVC, we wanted a blog home for them all. In this transition, we also changed our internal blog writing process from being just Git-dependent to Git- and DVC-dependent: the writing lives in Git, while the images are versioned with DVC and stored in a remote. 🤗

This admittedly may be like bringing a CNC router to a steak dinner (I feel like there should be a MythBusters episode on this). But it will help both the DevRel team and the Websites team become intimately familiar with how our users feel when using our tools, and potentially drive more feature improvements for you. In other words, we ❤️ you, and we're really serious about making our tools better for you so you don't have to build them yourselves!

Alex Kim O'Reilly MLOps Course

- Week 1: Kick-starting an ML project

- Week 2: ML pipelines and reproducibility

- Week 3: Serving ML models as web API services

- Week 4: CI/CD and monitoring for ML projects

Head here to sign up for the course.

LATAM AI

Gema Parreño Piqueras and our lead docs writer, Jorge Orpinel Perez, got to experience LATAM AI this year. Gema gave the talk Reproducibility and version control are important: Follow-up experiments with the DVC extension for VS Code. Both Gema and Jorge enjoyed the conference and meeting lots of people. Below you can see Gema with the winners of our DeeVee's Ramen Run Game, in which players roam DeeVee City answering questions to win ramen and the top spot on the leaderboard. Get yourself to one of the conferences we are attending to play! See winners Miguel Moran Flores, Efren Bautista Linares and Rodofo Ferro below.

New Hires

Ronan Lamy joins the DVC team from Bristol, UK. He has a Ph.D. in physics and worked as an open-source contractor and core developer of PyPy and HPy before joining Iterative. When he's not working, Ronan enjoys exploring the many fine restaurants and great local beers of Bristol. Originally from France, Ronan recently shared with me that his friends and family back home don't believe the food can be so good in Bristol, but he insists it is. Add it to your bucket list! If you're ever in Bristol, Ronan has recommendations for you!

Aleksei Shaikhaleev joins the Studio team as a backend developer. Originally from Russia, Aleksei has called Phuket, Thailand his home base for the last 10 years. When he's not working, he's really into surfing, skateboarding, motorcycles, and other fun activities like these. Aleksei also has a heart for rescuing cats: he has adopted five stray cats and cares for them at home!

David Tulga is our latest hire and joins the LDB team from California as a Senior Software Engineer. He previously worked at Asimov and Freenome. When not working, David enjoys a variety of outdoor activities such as biking, hiking, kayaking, sailing, and astronomy.

David's arrival makes him the 4th David on the team, putting the name David in a three-way tie with versions of Daniel and Alexander! Indeed, over 20% of our workforce is named David, Daniel, or Alexander. 😅

Open Positions

Use this link to find details of all the open positions. Please share with anyone looking to have a lot of fun building the next generation of machine learning to production tools! 🚀 But don't apply if your name is David, Daniel, or Alexander. Unless you're willing to be nicknamed, of course! It's getting confusing around here. 😂

✍🏼 New Blog posts

- Rob de Wit created a tutorial for using CML with Bitbucket, which CML now supports. Be sure to read it if Bitbucket is your Git provider of choice!

- Gema Parreño Piqueras' August Community Gems is full of great questions from the Community from our Discord server.

Upcoming Conferences

Conferences we will be attending through the end of the year:

- Dmitry Petrov and Mike Sveshnikov will give a talk and a workshop on our GitOps approach to a model registry at TWIML Con, October 4-7 (online)

- Dmitry Petrov will speak on the same topic at ODSC West in San Francisco, November 1-3

- Rob de Wit will speak at Deep Learning World - Berlin, October 5-6, with the talk Becoming a Pokémon Master with DVC: Experiment Pipelines for Deep Learning Projects

- Casper da Costa Luis will give the talk Painless cloud orchestration without leaving your IDE at MLOps Summit - Re-work - London, November 8-9

- Dmitry Petrov will speak at GitHub Universe, November 9-10, with the talk Connecting Machine Learning with Git: ML experiment tracking with Codespaces!

- Finally, we will be participating in the Toronto Machine Learning Summit, November 29-30 in Toronto (talks TBD)

❤️ Tweet Love

We loved finding DVC and CML used for benchmarking and reporting at Hugging Face, thanks to the tip-off from Omar Sanseviero! Look out for more projects involving Hugging Face and our tools coming soon!

@huggingface datasets uses @DVCorg & CML for benchmark and reporting 🥰 . More about the .yaml structure here --> https://t.co/NY5FMzjNuR Glad to discover common opensourceness @osanseviero ! 🤗🥹🦉

— Gema Parreño (@SoyGema) September 8, 2022

Have something great to say about our tools? We'd love to hear it! Head to this page to record or write a testimonial and join our Wall of Love ❤️

Do you have any use case questions or need support? Join us in Discord!

Head to the DVC Forum to discuss your ideas and best practices.